Paxos-based Sharded KV Storage System

Fault-tolerant distributed key-value store built for UW CSE 452 with exactly-once RPC, Viewstamped Replication, Multi-Paxos replication groups, and sharded transactions coordinated with 2PC and 2PL.

At a Glance

The Problem

The challenge was to build a storage system that remains correct under node failures, retries, and concurrent operations while scaling beyond a single replica group. A simple key-value server was not enough; the design needed fault tolerance, transactional coordination, and shard-aware consensus to preserve correctness.

System Architecture

Correctness Guarantees, Layered

The system builds correctness incrementally — each layer assumes the guarantee below it and adds exactly one new property. Exactly-once RPC eliminates duplicate mutations before any replication logic runs. Viewstamped Replication adds failover without data loss. Multi-Paxos provides shard-level consensus with a stable leader. Two-phase commit and locking tie it all together for cross-shard atomicity.

Client assigns each request a (clientId, seqNum) pair. The server maintains a per-client deduplication table so retried requests are identified and their cached result returned — never re-applied.

Primary-backup replication with view changes on failure. A new primary is elected from the replica set, state is reconciled, and the group resumes without data loss. Exactly-once semantics are preserved across view changes.

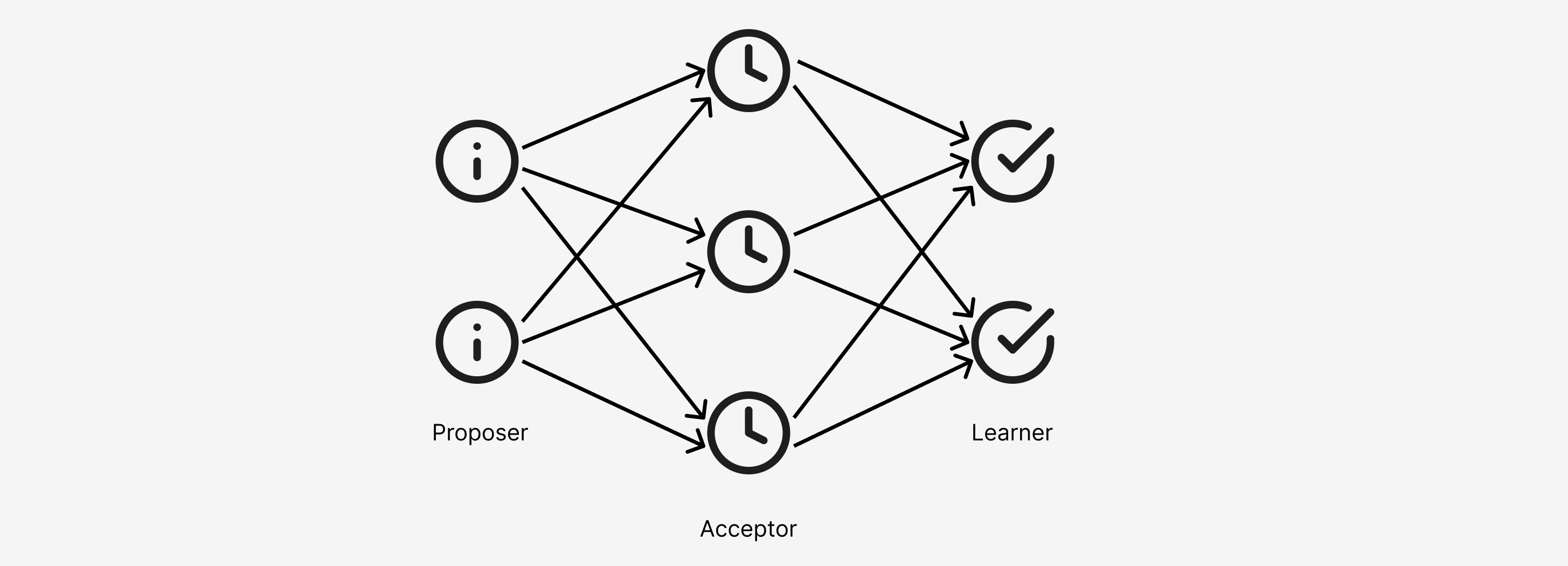

Each shard is backed by a dedicated Paxos replica group. A stable leader drives all proposals for its group; Phase 1 (Prepare) is skipped after leader election. Log-based state machine replication ensures all replicas converge to the same committed history.

Cross-shard operations coordinate through a two-phase commit protocol. Each shard-participant uses two-phase locking to prevent concurrent transactions from interleaving. The coordinator logs its decision before issuing Commit so crash recovery is deterministic.

Protocol Flows

Two core protocols drive correctness in this system. Paxos consensus handles single-shard replication — the leader drives prepare and accept rounds to commit each log entry. Two-phase commit coordinates cross-shard transactions, with 2PL ensuring participants hold locks through the commit decision. Click any step to inspect the protocol message.

Key Decisions

- 1

Implemented exactly-once RPC first so all higher-level replication logic could assume idempotent client semantics.

- 2

Used Viewstamped Replication for the primary-backup stage to keep failover logic explicit before introducing full Paxos groups.

- 3

Combined Multi-Paxos replica groups with shard ownership to avoid a single consensus domain becoming the bottleneck.

- 4

Applied 2PC and 2PL for cross-shard transactions, trading latency for correctness and easier reasoning about consistency.

Outcomes

- Delivered a working sharded KV store for CSE 452 with replication, transactions, and fault-tolerance mechanisms implemented end-to-end.

- Demonstrated strong consistency across distributed nodes in a course project setting modeled after production distributed databases.

- Built reusable understanding of consensus, leader failover, and distributed transaction tradeoffs for later systems design work.

Lessons Learned

- 1Exactly-once semantics are foundational — retry behavior becomes chaos if deduplication is not designed early.

- 2Consensus solves agreement, not end-to-end system design; transaction coordination and locking still dominate complexity.

- 3Sharding helps scalability, but cross-shard correctness reintroduces coordination costs very quickly.

- 4Course projects are an excellent place to feel the operational pain of distributed systems before facing it in production.