YANA - Clubhouse-like Voice Chatroom

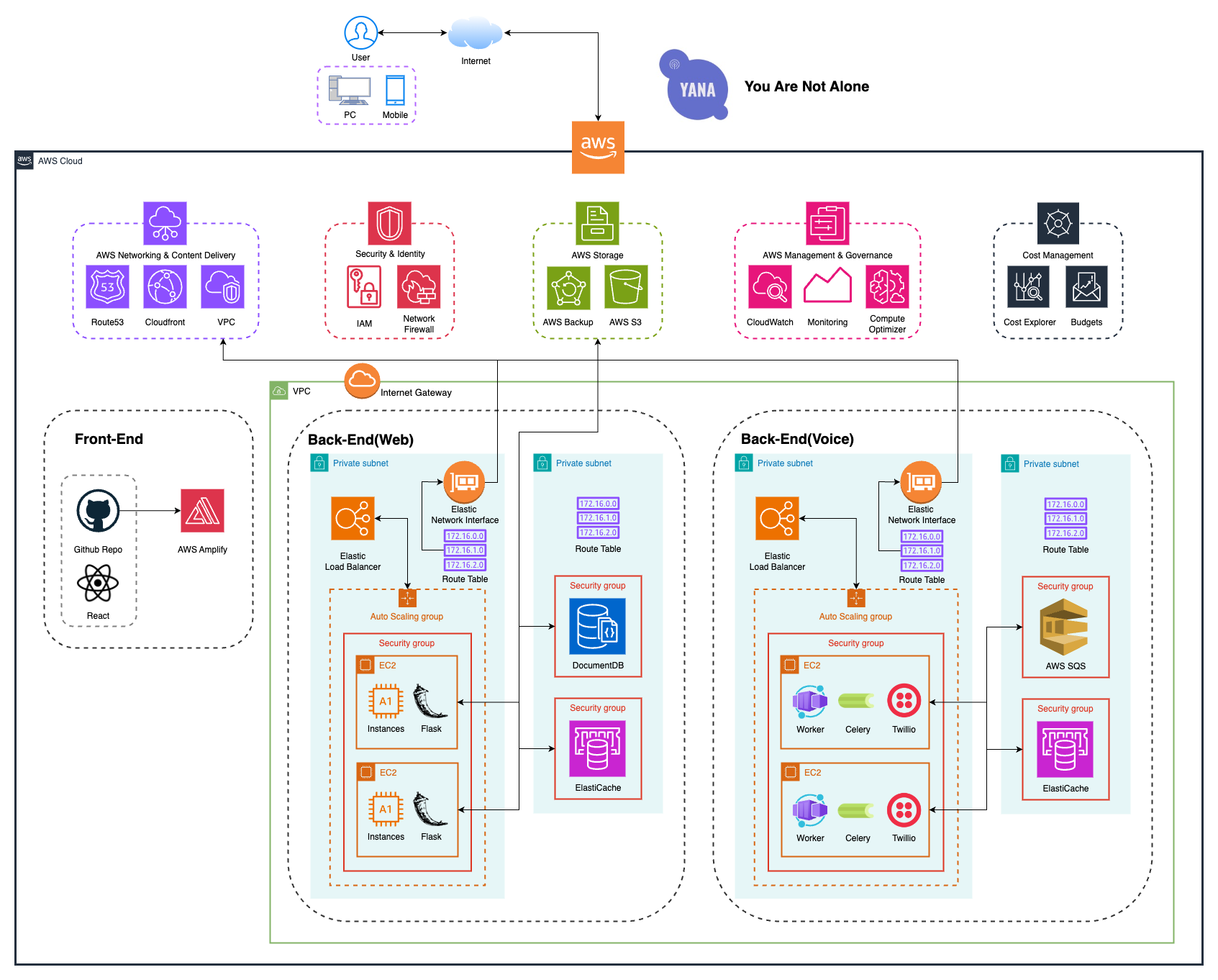

Cloud-based voice and text chat platform built during the pandemic with React, Flask, Twilio, EC2, SQS, Celery, and AWS networking primitives for scalable room-based communication.

The Problem

The goal was to create a room-based communication platform that felt immediate and social while remaining deployable on commodity cloud infrastructure. Real-time voice, text messaging, and asynchronous background work all had to coexist without overloading the synchronous web path.

Cloud Architecture

Sync vs. Async: Two Paths Through the System

The web service handles text messaging synchronously — authenticate, persist, read presence, broadcast, return. Voice setup follows an asynchronous path: the HTTP response returns immediately after enqueueing an SQS task, and the Celery worker delivers the Twilio access token to the client over the existing Socket.IO connection once it's ready. This separation keeps the synchronous path fast and prevents third-party API latency from blocking every room join.

Synchronous: Text messaging

- 1. HTTP request arrives: Flask handles auth, validates input

- 2. Persist to DocumentDB: Room state, message history

- 3. Read presence from ElastiCache: Room membership, Socket.IO session routing

- 4. Broadcast via Socket.IO: Real-time event to room members

- 5. Return 200 to caller: Synchronous path complete
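The five synchronous steps can be sketched as a single handler. This is a minimal illustration, not the project's code: `FakeBackends` and `handle_text_message` are hypothetical names, and plain in-memory containers stand in for DocumentDB, ElastiCache, and Socket.IO so the example runs without any cloud services.

```python
from dataclasses import dataclass, field


@dataclass
class FakeBackends:
    """In-memory stand-ins (assumptions) for the real services."""
    sessions: dict = field(default_factory=dict)  # auth token -> user id
    messages: list = field(default_factory=list)  # stands in for DocumentDB
    presence: dict = field(default_factory=dict)  # room id -> set of user ids (ElastiCache)
    emitted: list = field(default_factory=list)   # events "sent" over Socket.IO


def handle_text_message(backends, auth_token, room_id, text):
    """Run the five synchronous steps and return (status_code, body)."""
    # 1. Authenticate the caller and validate the input.
    user_id = backends.sessions.get(auth_token)
    if user_id is None:
        return 401, {"error": "unauthorized"}
    text = text.strip()
    if not text:
        return 400, {"error": "empty message"}

    # 2. Persist the message to the document store.
    message = {"room": room_id, "from": user_id, "text": text}
    backends.messages.append(message)

    # 3. Read room membership from the presence cache.
    members = backends.presence.get(room_id, set())

    # 4. Broadcast one event per room member.
    for member in sorted(members):
        backends.emitted.append((member, "message", message))

    # 5. Return to the caller; nothing in this path waits on a third party.
    return 200, {"delivered_to": len(members)}
```

Every step here is a fast local or in-VPC call, which is why the whole path can stay inside one request.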

Asynchronous: Voice setup

- 1. Enqueue task to SQS: Celery task message serialized and sent

- 2. HTTP responds immediately: Caller does not wait for task completion

- 3. Celery worker picks up task: At-least-once delivery from SQS

- 4. Call Voice Service: Generate Twilio access token, create TwiML room

- 5. Deliver result via Socket.IO: Token pushed to client over existing WS connection
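The asynchronous steps can be sketched in the same spirit. This is an illustration under stated assumptions: a standard-library `queue.Queue` stands in for SQS, `run_worker_once` stands in for a Celery worker, `create_voice_token` is a placeholder for the Twilio call, and the 202 status code is a guess at what "responds immediately" looked like. Because SQS delivers at least once, the worker skips task IDs it has already completed.

```python
import queue
import uuid

task_queue = queue.Queue()   # stand-in (assumption) for the SQS queue
processed_task_ids = set()   # lets the worker ignore redelivered tasks
socket_events = []           # stand-in for events pushed over Socket.IO


def join_voice_room(user_id, room_id):
    """HTTP handler: enqueue the task and respond without waiting for it."""
    task = {"task_id": str(uuid.uuid4()), "user_id": user_id, "room_id": room_id}
    task_queue.put(task)
    return 202, {"task_id": task["task_id"], "status": "pending"}


def create_voice_token(user_id, room_id):
    """Placeholder for the slow third-party call (Twilio in the original)."""
    return f"token-for-{user_id}-in-{room_id}"


def run_worker_once():
    """Worker: handle one queued task. Returns False for a duplicate delivery."""
    task = task_queue.get_nowait()
    if task["task_id"] in processed_task_ids:
        return False  # already handled; at-least-once delivery can repeat tasks
    token = create_voice_token(task["user_id"], task["room_id"])
    # Push the result to the client over its existing real-time connection.
    socket_events.append((task["user_id"], "voice_token", token))
    processed_task_ids.add(task["task_id"])
    return True
```

The handler returns before any token exists, so the client learns the outcome only from the pushed event. In a real deployment the set of processed IDs would need to live in shared storage rather than worker memory.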

Request Flows

Two flows illustrate how synchronous and asynchronous paths coexist. Joining a voice room traces the full async path, from the initial HTTP join through SQS task dispatch, Celery worker execution, Twilio token generation, and Socket.IO callback delivery. Sending a text message takes the fast synchronous path through DocumentDB and Socket.IO broadcast.

Key Decisions

- 1. Separated web and voice services so scaling and failure characteristics for chat versus voice traffic could evolve independently.

- 2. Used Celery workers with SQS to offload asynchronous work instead of forcing all event handling through synchronous app servers.

- 3. Put backend services in private subnets behind load balancers to keep the public attack surface narrow.

- 4. Combined managed AWS primitives with application-level real-time tooling to keep infrastructure understandable for a small team.
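For the Celery-with-SQS decision, a configuration module along these lines is one plausible starting point. The setting names are standard Celery options, but every value below (region, queue names, timeouts) is an assumption chosen for illustration, not the project's actual configuration.

```python
# celeryconfig.py: a sketch of Celery settings for an SQS broker.
# All values are illustrative assumptions.

# "sqs://" with no credentials lets the AWS SDK resolve them from the
# environment or an instance role.
broker_url = "sqs://"

broker_transport_options = {
    "region": "us-east-1",        # assumed region
    "visibility_timeout": 300,    # seconds; should exceed the longest task runtime
    "polling_interval": 1,        # seconds between polls when the queue is empty
    "wait_time_seconds": 10,      # SQS long-polling window
    "queue_name_prefix": "yana-", # hypothetical prefix to namespace queues
}

task_default_queue = "voice-setup"  # hypothetical queue name

# Acknowledge after the task finishes, so a crashed worker's task reappears
# once the visibility timeout expires (tasks must therefore be idempotent).
task_acks_late = True

# Fetch one message at a time so a slow third-party call does not hold
# other tasks hostage on the same worker.
worker_prefetch_multiplier = 1

# Results travel back over Socket.IO rather than a Celery result backend.
task_ignore_result = True
```

A worker would load this with `app.config_from_object("celeryconfig")`. Late acknowledgement combined with a visibility timeout is what makes delivery at-least-once, which is why duplicate-safe tasks matter.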

Outcomes

- Delivered a functioning room-based platform for real-time voice and text communication during the COVID era.

- Created a modular cloud architecture that could scale the web tier, voice tier, and worker tier independently.

- Improved responsiveness by moving call setup and background processing into asynchronous worker flows.

Lessons Learned

- 1. Real-time products become distributed systems very quickly: queues, workers, and failure isolation matter even at modest scale.

- 2. Voice features are operationally different from standard web requests, so isolating them pays off.

- 3. Security groups and private networking are easy to skip in prototypes, but they become essential as soon as you expose user communication.

- 4. A small amount of asynchronous architecture goes a long way when the alternative is blocking on third-party APIs.